Written By: Natalia Alvarez Rodriguez

At Georgia Tech EcoCAR, testing and validation are at the core of everything we do. They shape the safety, reliability, and real-world performance of our vehicle while preparing our team for competition and hands-on engineering challenges. Just as importantly, these processes protect our team members as they work with the Cadillac LYRIQ. Ultimately, our goal is simple: to build a vehicle people can trust.

Our Cadillac LYRIQ, “Big Mike,” is designed for people. What is a car’s purpose if not to move people in a vehicle that feels smooth and enjoyable to ride in? Testing ensures our systems are safe, but it also pushes us to become better engineers and communicators by showing us exactly where we can improve. The data we gather shows us what works and what doesn’t work yet.

To illustrate how we test, we will focus on our Adaptive Cruise Control (ACC) strategy, which we prepared to test at the EcoCAR Dynamometer Testing Event.

We began by developing the first version of our Adaptive Cruise Control algorithm. “This initial version was foundational. It established baseline functionality and helped us understand how our system responded to different simulated scenarios,” said Paul Barsa, CAVs lead.

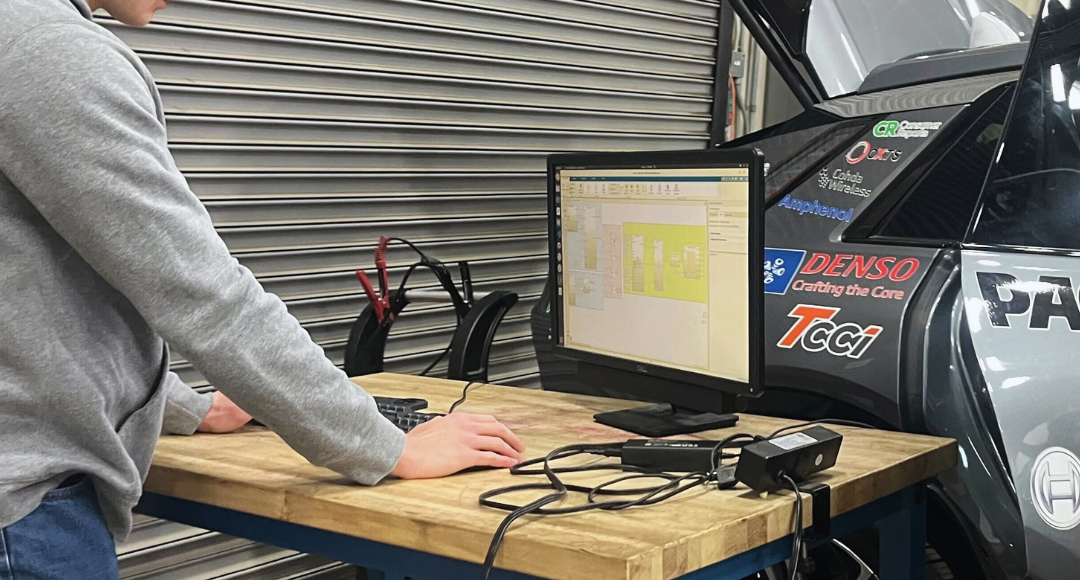

Next, we used Argonne National Laboratory’s RoadRunner platform to run our ACC algorithm against the competition-provided scenario in both Model-in-the-Loop (MIL) and Hardware-in-the-Loop (HIL) environments. In MIL testing, we validate our control logic in a fully simulated software environment. In HIL testing, we integrate real vehicle hardware components into the simulation environment. HIL testing bridges the gap between pure simulation and on-road testing, helping ensure our systems behave correctly when interacting with physical components.
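To make the MIL idea concrete, here is a minimal sketch of a controller running against a purely simulated lead vehicle. It is not our ACC algorithm and it does not use RoadRunner. It assumes a textbook constant time-gap law, and every name, gain, and scenario value in it (`AccParams`, `acc_accel`, `run_mil`, the 1.8 s time gap, the lead-vehicle speed profile) is hypothetical and chosen only for illustration.

```python
from dataclasses import dataclass


@dataclass
class AccParams:
    # All values are illustrative assumptions, not a real calibration.
    time_gap_s: float = 1.8    # desired time gap to the lead vehicle (s)
    standstill_m: float = 5.0  # desired gap at zero speed (m)
    k_gap: float = 0.2         # gain on gap error (1/s^2)
    k_speed: float = 0.6       # gain on relative speed (1/s)
    a_min: float = -3.0        # braking limit (m/s^2)
    a_max: float = 1.5         # acceleration limit (m/s^2)


def acc_accel(gap_m, ego_speed, lead_speed, p):
    """Constant time-gap law: acceleration command from gap and speed errors."""
    desired_gap = p.standstill_m + p.time_gap_s * ego_speed
    accel = p.k_gap * (gap_m - desired_gap) + p.k_speed * (lead_speed - ego_speed)
    # Clamp the command to the assumed actuator limits.
    return max(p.a_min, min(p.a_max, accel))


def run_mil(lead_speeds, dt=0.1, ego_speed=20.0, gap_m=50.0, params=None):
    """Step the controller against a simulated lead-vehicle speed trace."""
    p = params or AccParams()
    log = []
    for lead_speed in lead_speeds:
        accel = acc_accel(gap_m, ego_speed, lead_speed, p)
        # Simple point-mass ego model: integrate speed, then the gap.
        ego_speed = max(0.0, ego_speed + accel * dt)
        gap_m += (lead_speed - ego_speed) * dt
        log.append((gap_m, ego_speed, accel))
    return log


# Hypothetical scenario: lead cruises at 20 m/s for 20 s, then drops to 12 m/s.
scenario = [20.0] * 200 + [12.0] * 600
log = run_mil(scenario)
min_gap = min(gap for gap, _, _ in log)
final_gap, final_speed, _ = log[-1]
```

Checks such as "the gap never closes to zero" or "the ego vehicle settles at the lead vehicle's speed" can then be run on `log` before any hardware is involved, which is the kind of early feedback MIL testing is meant to provide.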

MIL and HIL testing allowed us to validate logic and performance before testing the full vehicle on the road. As our vehicle evolved, we were eventually able to conduct physical testing at the Georgia Public Safety Training Center, where we ran the scenario on a private track. This particular run was not for competition points, but it was critical for understanding how our algorithm translated from simulation to the real world.

After validating in controlled environments, we moved to highway testing with a lead vehicle. These real-world tests exposed areas where our ACC system could improve responsiveness and smoothness. As a result, we rewrote portions of the algorithm to make it more reactive and refined its behavior under dynamic conditions.

Following additional highway refinement, we ran the scenario for competition points at the EcoCAR Dynamometer Testing Event. This marked a milestone for our team: the first time we conducted a CAVs test on the dynamometer. Translating an automated driving scenario to the dyno required careful calibration, coordination, and strong validation work across subteams.

Now, we are focused on hyper-optimization. We are fine-tuning our system to maximize both performance and efficiency so that we can present our very best ACC at the Year 4 Competition.

Testing is iterative. It requires humility, patience, attention to detail, and an openness to return to something that isn’t perfect yet. Ultimately, it is not just about earning points — it is about building systems we are proud of: systems that are safe, reliable, and ready for the real world.